From vacuum tubes to words

The structural evolution of how we instruct computers

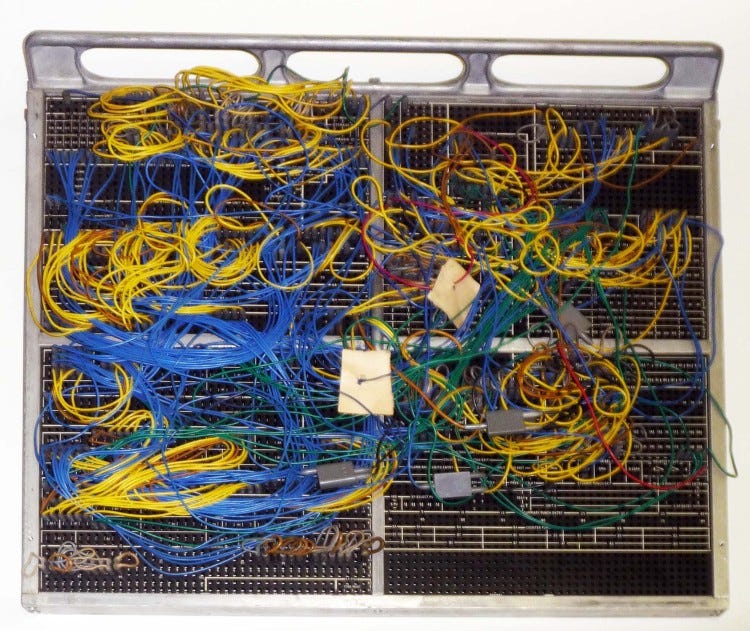

The first computers were single-purpose machines. The program was built directly into the hardware, where components were wired into a single, unified circuit. In essence, the hardware was the software.

This approach to building computers was time-consuming and inefficient because a logical error was usually an error in construction. In the best case, you could discover a problem by double-checking the circuit against the schematic before plugging the machine into the outlet. In the worst case, you’d start the machine and it would die instantly in an electrical fire.

Programmable computers were the solution to this problem. The idea was to build a machine that doesn’t execute a single fixed task but can be instructed what to do after it is built. How this idea evolved into practice is a story of both technological innovation and conceptual breakthroughs.

At first we had plugboards.

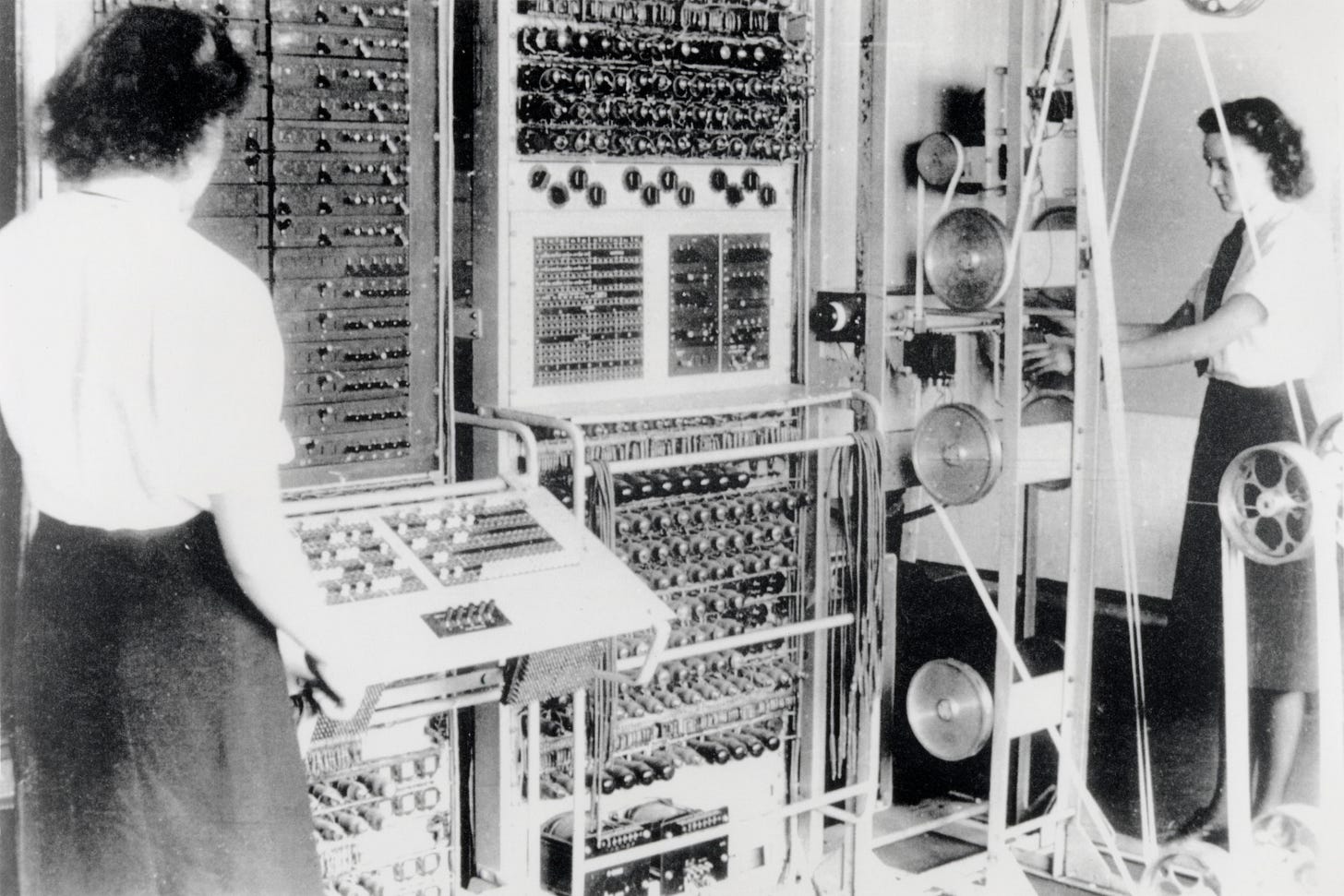

One of the first programmable computers was Colossus, developed by the British during World War II to break German encryption codes. While operators could reprogram the machine by changing switches and rewiring connections, they could not reconfigure the machine to run any possible program. At most, they could solve different problems relating to codebreaking. 1

Structurally, there is no distinction between the program and the machine.

“Reprogramming” was a laborious process, starting with flowcharts and paper notes, followed by detailed engineering designs, and then the often-arduous process of physically re-wiring and re-building the machine. 2

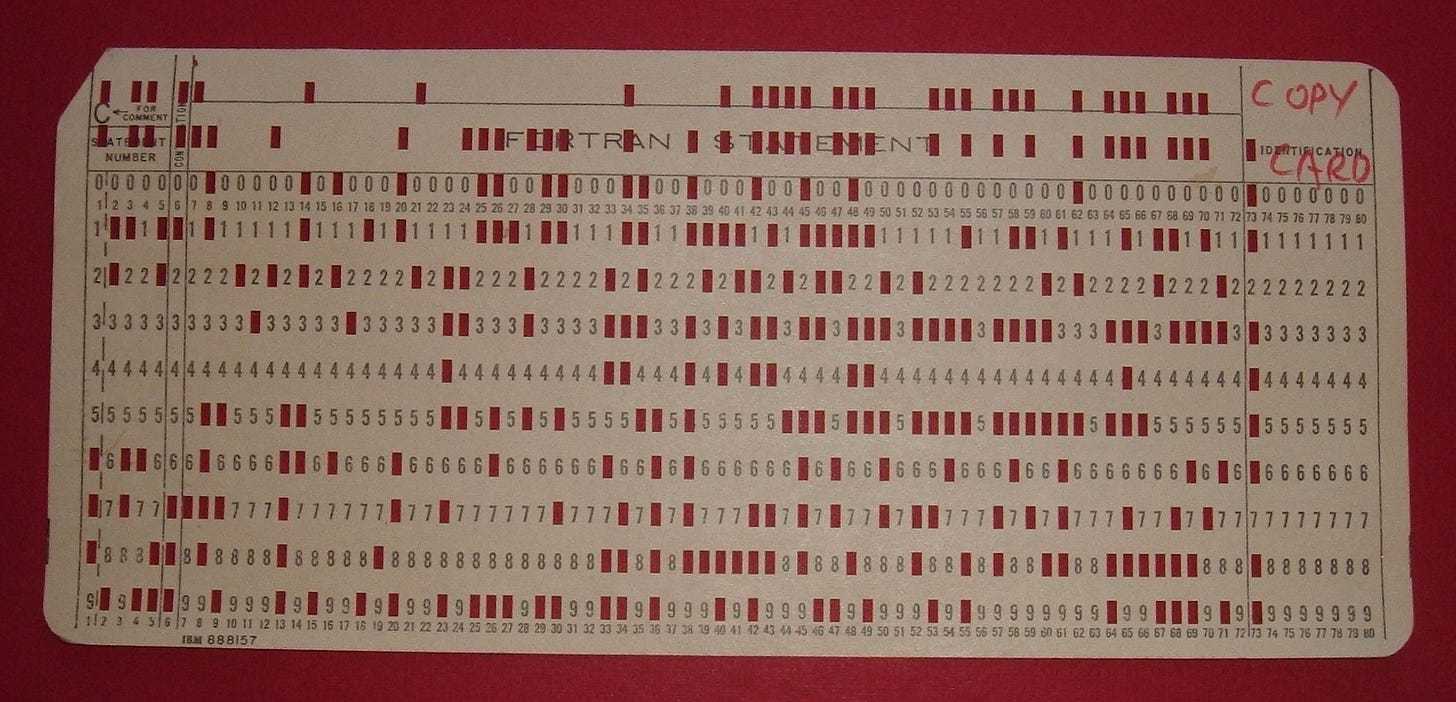

Then we had punched tapes and cards.

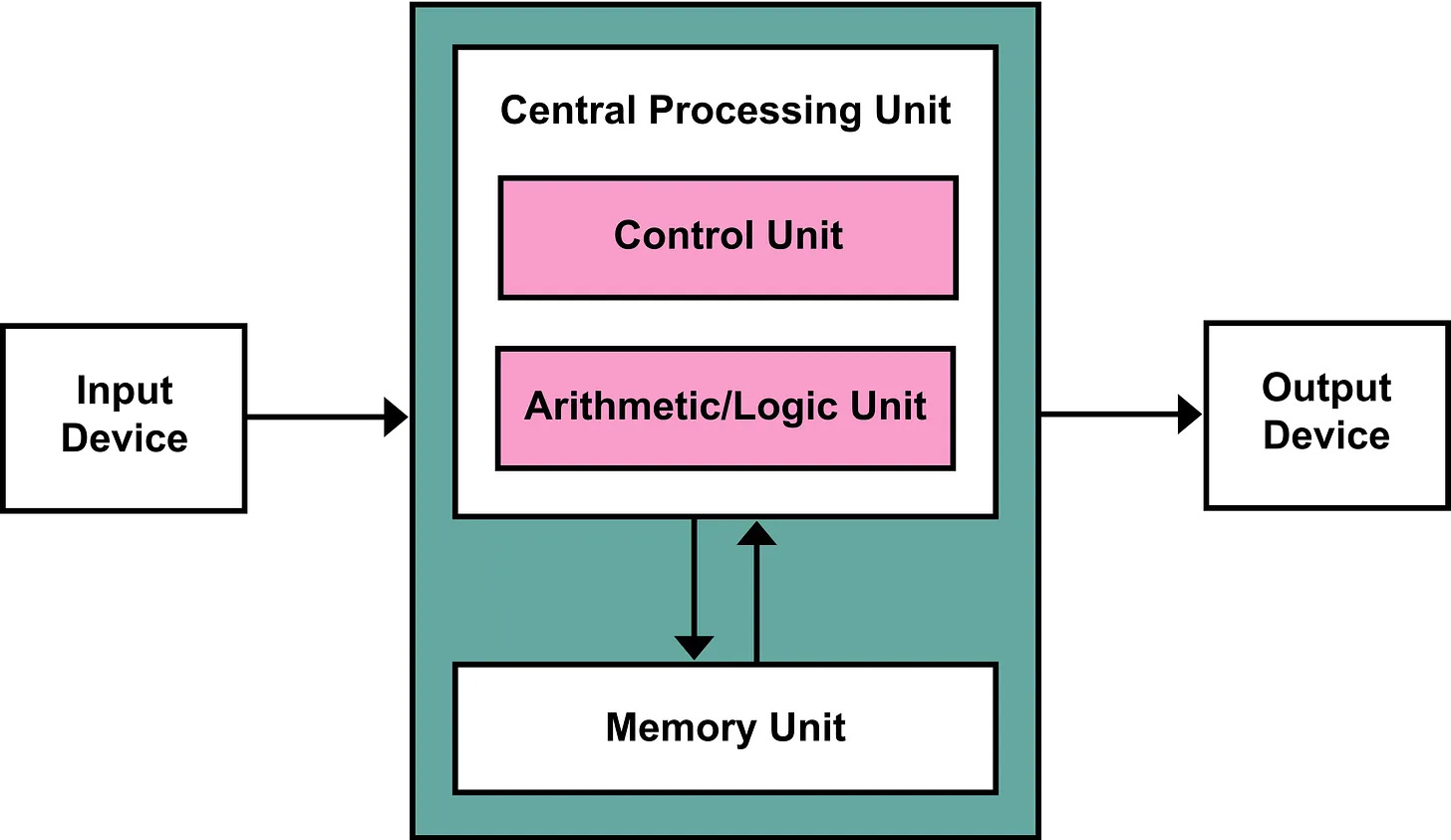

In order to build a computer that could change its function over time without needing physical redesign, the stored-program computer was invented. The idea was to store the program, i.e. a list of instructions, into a separate storage device or medium that could be plugged into and out of the computer. The machine would read and execute each instruction in sequence.

EDVAC, built in the late 1940s, was one of the first computers designed around this model and helped establish the blueprint for general-purpose computing.

The basic architecture of this machine is known as the “von Neumann architecture” after researcher, John von Neumann, who described it. The von Neumann architecture serves as the basic blueprint for almost every modern computer.

Structurally, the program is now separate from the machine.

For the first time, the program existed independently from the computer it ran on. This separation allowed hardware and software to evolve independently. This conceptual freedom would be the basis for all future software innovations.

Then we had assembly language.

With the invention of stored-program computers, we needed a way to write programs for them. These computers included in their design an instruction set: a formal specification for all the mathematical/machine operations they could execute along with the binary encoding for each instruction.

Programming in raw machine code, i.e., long sequences of 1s and 0s, was painfully slow and error-prone. A textual representation of machine instructions was introduced to make writing and reading programs easier (along with dedicated machines that would take program text as input and produce punched tapes or cards as output).

Let’s use the implementation of a stack as a working example:

; stack with max

; layout in memory:

; [size][storage[0]][storage[1]]...[storage[99]]

; ------------------------------------------------------------

; input is stack pointer in rdi

stack_init:

mov qword [rdi], 0 ; set size = 0

ret

; ------------------------------------------------------------

; input is stack pointer in rdi, value in rsi

; output is 1 if success, 0 if full in rax

stack_push:

mov rax, [rdi] ; load size

cmp rax, 100 ; compare size to maximum capacity

jge return_zero ; jump if greater or equal

mov [rdi + 8 + rax*8], rsi ; store value at storage[size]

inc rax ; increment size

mov [rdi], rax ; store size

mov rax, 1 ; return success

ret

return_zero:

mov rax, 0 ; return failure

ret

; ------------------------------------------------------------

; input is stack pointer in rdi

; output is popped value or 0 if empty in rax

stack_pop:

mov rax, [rdi] ; load size

cmp rax, 0 ; compare size to 0

je return_zero ; jump if equal

dec rax ; decrement size

mov [rdi], rax ; store size

mov rax, [rdi + 8 + rax*8] ; load value from storage[size]

ret

; ------------------------------------------------------------

; input is stack pointer in rdi

; output is maximum value in stack, or 0 if empty in rax

stack_max:

mov rcx, [rdi] ; load size

; Check if empty

cmp rcx, 0 ; compare size to 0

je return_zero ; jump if equal

mov rax, [rdi + 8] ; max = first element

mov rbx, 1 ; counter = 1

cmp rcx, 1 ; compare size with 1

je done ; jump if equal

loop:

mov rdx, [rdi + 8 + rbx*8] ; Load storage[counter]

cmp rdx, rax ; Compare with current max

jle skip ; jump if less or equal

mov rax, rdx ; update max

skip:

inc rbx ; increment counter

cmp rbx, rcx ; compare counter with size

jl loop ; jump if less

done:

retAssembly is a barely human-readable format by today’s standards. Due to memory constraints at the time, each instruction has a short mnemonic instead of a descriptive name to save characters: ret is “return”, inc is “increment”, jle is “jump if less or equal”, etc. Instructions can also have arguments: mov rax, rdx being “copy the value stored in the rdx register into the rax register”. It is legible once you learn all the cryptic names but it’s unwieldy.

The key characteristics are:

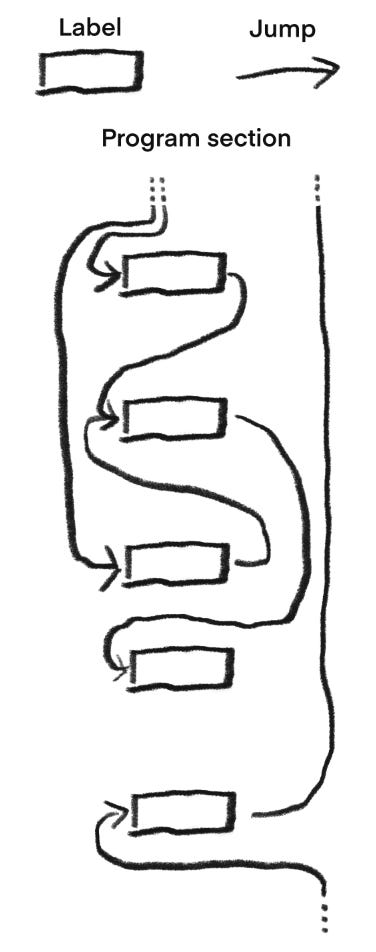

Jumps are the only way to control execution flow

The programmer manages processor memory (registers) explicitly

Labels are just addresses to jump to

No enforced distinction between code and data

Structurally, the program is a flat sequence of instructions.

Every instruction exists at the same level, connected only by jumps that send execution from one line to another. The program's structure is whatever the programmer imposes through convention and discipline. What looks like a function (stack_push, stack_pop) is merely a comment, a name preceding a block of instructions with nothing preventing execution from entering in the middle or treating its internal labels as entry points.

Then we had structured programming languages.

Assembly made the separation between software and hardware practical, but that separation was thin. Writing assembly still demanded a machine-centric mental model. The programmer had to think like the computer, not like a human solving a problem.

Now with the software fully separated from the hardware, major leaps going forward would be conceptual, relating to how we think about programs and the abstract components that form them rather than concrete details relating to computer construction.

Structured programming emerged in the 1960s as a discipline for writing programs whose structure mirrored the logic they expressed. Instead of thinking in terms of registers and memory addresses, programmers could think in terms of variables and logic. Instead of weaving together jumps to create loops and conditionals, they could use blocks and statements as built-in constructs. Instead of relying on convention to keep programs correct, they could rely on rules enforced by the language itself.

Let’s look at the same stack implementation, now written in C:

#include <errno.h>

#define MAX_CAPACITY 100

typedef struct {

int size;

int storage[MAX_CAPACITY];

} stack_t;

void stack_init(stack_t *s) {

s->size = 0;

}

int stack_push(stack_t *s, int value) {

if (s->size >= MAX_CAPACITY) {

errno = ENOBUFS;

return 0;

}

s->storage[s->size] = value;

s->size += 1;

return 1;

}

int stack_pop(stack_t *s) {

if (s->size == 0) {

errno = ENODATA;

return 0;

}

s->size = s->size - 1;

return s->storage[s->size];

}

int stack_max(stack_t *s) {

if (s->size == 0) {

errno = ENODATA;

return 0;

}

int max = s->storage[0];

for (int i = 1; i < s->size; i++) {

int current = s->storage[i];

if (current > max) {

max = current;

}

}

return max;

}I used this stack to implement a command line calculator that uses post-fix notation. You can see the generated assembly and run the program with different inputs here.

What were once comments explaining the logic in assembly have become the code itself. A program called a compiler translates this human-readable text into the machine code that the processor understands, freeing the programmer from having to manage the low-level details.

The key characteristics are:

No arbitrary jumps:

Control flow is now expressed through blocks/statements which are reusable and composable:if-then-else,do,while,for, etc…Variables:

The programmer no longer manages registers explicitly. We focus on logical pieces of data addressed by symbolic names. The compiler maps these variables to registers and memory locations automatically.Scope:

Variables are confined to blocks. You can’t access data outside the scope it is defined in. You can’t read a loop counter after the loop finishes. This eliminates whole classes of bugs which were easy to make by accident in assembly.Functions:

Sections of logic meant to be reusable can be packaged into a named block that accepts inputs and produces outputs. A function is a contract: give it these arguments, and it returns this result. The implementation details may be visible, but they are not directly accessible. You don't need to know howstack_maxcomputes the maximum, only that it does. Functions become the building blocks of larger programs, tested once and used everywhere.Types:

Data that is logically related can be grouped together. An integer is not just 64 bits, it’s a number you can add, subtract, and compare. A character is not just a byte, it’s a letter you can print and capitalize. Types give meaning to raw memory. The compiler ensures you don’t accidentally add a name to a bank balance or treat a temperature as a loop counter. What was once a convention becomes an enforceable rule.

All of these are voluntary constraints. We gave up the freedom to jump anywhere, to address memory directly, to treat all data as interchangeable bits. In exchange, we gained programs that are comprehensible, composable, and, for the first time, provably correct in ways the compiler could enforce.

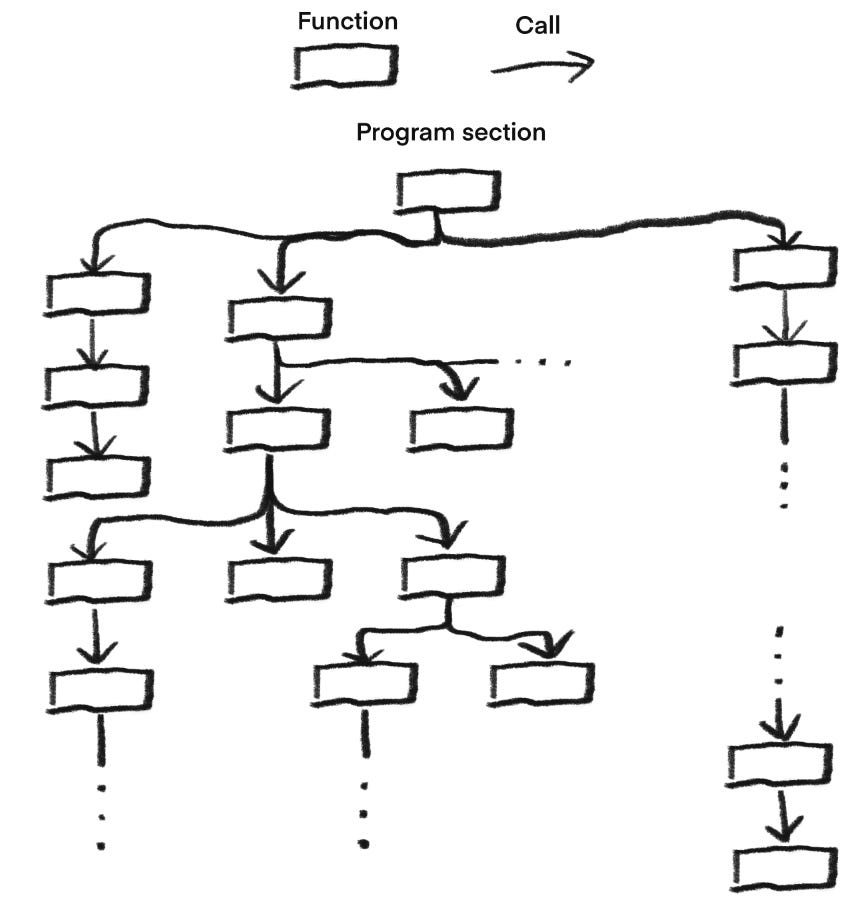

Structurally, the program is a hierarchy.

A function contains statements; a loop contains a block; a conditional contains branches (also blocks). This hierarchy mirrors the logic of the computation itself. You can read a well-written structured program from top to bottom and understand what it does, because the structure of the text reflects the structure of the process.

Then we had objects.

As software grew larger and more complex, a new problem emerged: managing complexity at scale. The technical problems that were threatening big projects were:

State and behavior were separated.

In structured programming, data structures and the functions that operated on them lived apart. There was no mechanism to tie them together. If you had astackdata structure, any function in the program could access its internal array, whether it was supposed to or not. The compiler could not enforce which functions were legitimate users of thestack. You relied on convention, documentation and discipline to keep the system consistent. As programs grew, conventions broke down.Code and data proliferated together.

As systems expanded, the number of functions and data structures grew in parallel. A new kind of resource required both a data structure to hold its state and a set of functions to operate on it. There was no way to package these together as a single unit. The global namespace became crowded with prefixes and naming schemes meant to indicate what belonged to what.Change was difficult to isolate.

When you needed to change how a data structure worked (replacing the internal storage of astackfrom an array to a linked list) you had to find every function that accessed it. Some of those functions might be scattered across thousands of lines of code, written by different people at different times. Changing one part of a program risked breaking unrelated parts.The program’s structure did not reflect the problem’s structure.

When modeling a real-world system: a bank, a factory, a city, you had to translate your mental model of accounts, customers, and transactions into a collection of data structures and functions. The connection between the problem and the code was loose. Someone reading your code would see arrays and loops, not accounts and customers. The gap between the problem domain and the implementation made programs harder to understand, modify, and communicate to others.

These were not failures of discipline but failures of abstraction. The tools available to programmers did not match the structure of the problems they were solving.

The next leap was object-oriented programming (OOP). Its origins lie in two separate domains3 that converged in the late 1960s and it addressed these problems by bundling state and behavior together into a single unit: the object. An object contains both the data that represents its state and the functions (called methods) that operate on that data. This shift solved several problems at once:

Encapsulation:

The data inside an object is protected. Only the object’s own methods can modify it. The compiler enforces this.Modularity:

Objects become independent units that can be developed, tested, and maintained in isolation. AStackobject contains everything needed to manage its collection of items in the right order. Its internal implementation can change without affecting the rest of the program, as long as its interface remains the same.Fidelity to the problem domain:

Objects can directly represent the concepts in the problem being solved. A banking system hasAccountobjects,Customerobjects, andTransactionobjects. The code mirrors the mental model.Extensibility:

New kinds of objects can be defined by extending existing ones. ASavingsAccountis anAccount, it inherits the behavior of a generic account while adding its own specialized rules. This allows programs to grow by adding new types without modifying existing code.

Let’s update our stack implementation:

#include <stdexcept>

#include <vector>

#include <ranges>

#include <algorithm>

class Stack {

private:

std::vector<int> storage;

public:

Stack() = default;

void push(int value) {

storage.push_back(value);

}

int pop() {

if (is_empty()) {

throw std::underflow_error("Stack underflow: cannot pop from empty stack");

}

int value = storage.back();

storage.pop_back();

return value;

}

int max() {

if (is_empty()) {

throw std::underflow_error("Stack underflow: cannot get max from empty stack");

}

return std::ranges::max(storage);

}

int size() const {

return storage.size();

}

bool is_empty() const {

return storage.empty();

}

};You can find the updated version of the example program here.

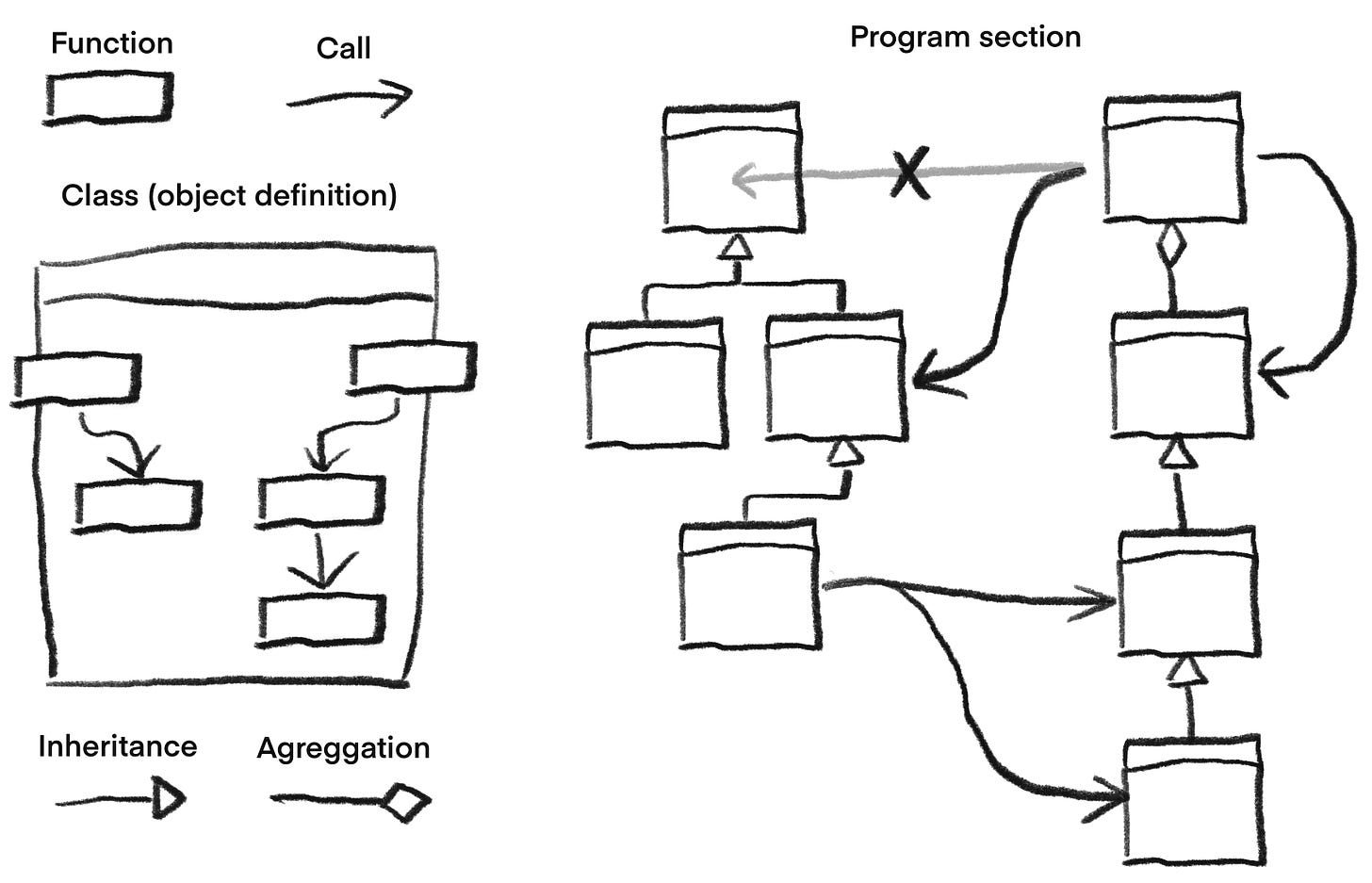

Structurally, the program becomes a collection of independent hierarchies.

Structured programming organizes code into a single hierarchy of nested blocks (functions containing loops, containing conditionals, and so on) that describe step-by-step execution of the program. OOP allows us to break a system into multiple such hierarchies, which we can individually focus on. With data and logic coupled, each hierarchy can represent both behavior and relationships between data.

A banking system might have an accounting hierarchy: SavingsAccount and CheckingAccount both inherit from Account, each adding specialized behavior. A user interface might have a hierarchy of visual elements: Button, TextField, and Slider all inherit from Widget, each implementing its own rendering logic. These hierarchies exist side by side, isolated from one another. The Account hierarchy does not need to know about the Widget hierarchy, and changes to one do not affect the other. Complexity is not eliminated, but it is contained.

Within each hierarchy, inheritance provides a way to express commonality while isolating variation. The shared behavior lives at the root, and each specialized type only adds what makes it different. This allows complexity to be broken down into manageable pieces, each piece a self-contained tree of related concepts.

This model mirrors how complex systems are organized in the real world.

This is where we are today (for the most part).

OOP is the dominant paradigm in the software industry and is the latest evolution in the imperative style, the history of which was succinctly covered in this article.

Functional programming was also developed as a different approach to managing software complexity and exists alongside all the ideas discussed here. I plan to cover programming paradigms broadly and functional programming specifically in future articles.

For further reading, I discuss the current state of the software engineering field and OOP’s role in it in this article: Why there’s no programming bible.

Omissions

The history described previously is not clear cut. All innovations form a spectrum, with transitional forms bridging each conceptual leap with lots of overlap.

Between assembly and high-level languages came macro assemblers. These allowed programmers to give symbolic names to values and to define macros: named sections of code that could be reused by typing the macro name instead of copying the text. This provided rudimentary abstraction while still working directly with registers and memory addresses.

Note: The assembly implementation of the stack previously shown uses macros. It is not pure assembly.

Between assembly and structured programming came languages like Fortran (1957) and COBOL (1959). Fortran introduced variables, arrays, and formulas that resembled mathematical notation, but it still relied heavily on goto statements (jumps) for control flow. In its initial version, it lacked the block structures, scoping rules, and loop constructs that would later define structured programming. These languages made programming more accessible but did not yet provide the discipline that structured programming would enforce.

Note: For the sake of brevity I conflate structured and procedural programming under the umbrella of structured programming.

Before the first fully object oriented language: Simula (1967), there were languages like ALGOL (1960), which Simula is an approximate superset of. Later languages like C++ (1985) allowed both object oriented and procedural styles.

There are a lot of transitional and hybrid forms in the taxonomical tree of programming languages.